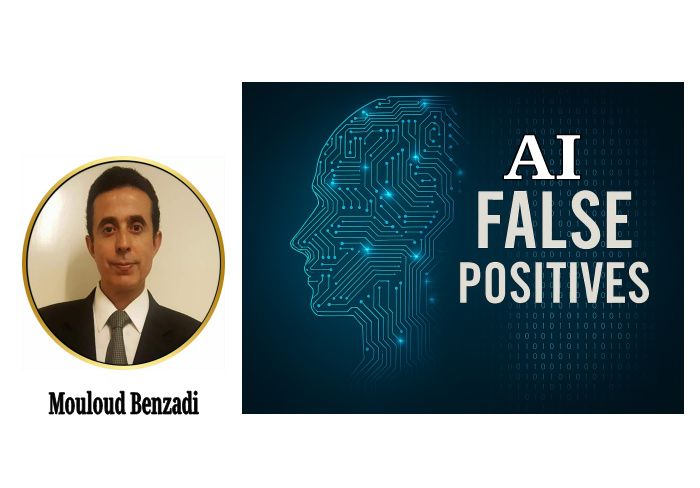

As highlighted in our last piece, the alarm raised by students, professionals, and authors regarding AI detectors was not a false one. It is supported by growing scientific evidence. More recent research shows that AI writing detectors, introduced with the promise of safeguarding academic and professional integrity, have not only failed to fulfill that role but have also caused significant harm to reputations, livelihoods, and students’ academic progress and future opportunities. Far from being neutral and efficient tools, they have proven to be inconsistent, biased, and systemically flawed, raising urgent ethical and practical questions about their continued use.

Let us examine some of this evidence.

Persistent Unreliability in AI Detection

Emerging research indicates that AI detectors are far less reliable than widely assumed. One recent academic study, Almost AI, Almost Human: The Challenge of Detecting AI-Polished Writing, reports striking results.

Researchers Shoumik Saha and Soheil Feizi of the University of Maryland, College Park (USA) wrote in their study, published in February 2025: "Our findings reveal critical weaknesses in existing AI-text detection systems. AI-text detectors exhibit alarmingly high false positive rates, often flagging very minimally polished text as AI-generated. For instance, minimal polishing with GPT-4o can lead to detection rates ranging from 10% to 75%, depending on the detector."

A separate 2026 study by researchers at Sultan Qaboos University (Mohammad Hadra, Karleen Cambridge, and Mostefa Mesbah) provides concrete data on the unreliability of AI content detectors. Their evaluation found that leading tools achieved overall accuracy rates of only 69% and 61%, with performance on hybrid human–AI texts dropping to nearly 0%. The study also revealed significant bias: accuracy for scientific texts was 28–38 percentage points lower than for humanities texts, indicating that these tools are unfit for dependable authorship detection.

Inconsistency and Bias in AI Text Detection

Besides unreliability, inconsistency further undermines the credibility of AI‑text detectors. According to Saha and Feizi (2025), the reliability of AI‑text detectors depends on which language model performs the refinement. Text polished by smaller or older models is significantly more likely to be labeled as AI-generated than text refined by newer systems. For instance, nearly half of the passages edited with LLaMA-2-7B were classified as AI-generated, compared with roughly one quarter of those refined using DeepSeek-V3.

According to the study, detection accuracy also varies across writing domains. Speech-like or conversational content is especially prone to misclassification, while academic abstract-style writing shows comparatively stronger resistance. These patterns suggest that current detectors are not neutral or consistently reliable indicators of authorship.

Another study on consistency across detectors, Do AI Detectors Work Well Enough to Trust? (2025) by Brian Jabarian and Alex Imas of the University of Chicago Booth School of Business, found that AI-text detection accuracy varies widely depending on the tool, text length, generating model, and decision threshold.

The study found that the performance of leading tools (GPTZero, Originality.ai, and Pangram) varied drastically. For example, Originality.ai’s false negative rate soared to 40% in some cases, while Pangram’s remained below 4%. This severe discrepancy reveals that the reliability of an AI-detection claim depends more on the chosen tool than on the text itself, highlighting the absence of a consistent, objective standard.
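To make the role of the decision threshold concrete, here is a minimal Python sketch. The scores are invented for illustration and do not come from any of the studies cited here; the function name `error_rates` is likewise hypothetical. It only shows the general mechanism: the same set of detector scores yields very different false positive and false negative rates depending on where the cutoff is placed.

```python
def error_rates(human_scores, ai_scores, threshold):
    """Return (false_positive_rate, false_negative_rate) for one threshold.

    A text counts as "flagged as AI" when its score is >= threshold.
    """
    # Human-written texts that get flagged are false positives.
    false_positives = sum(score >= threshold for score in human_scores)
    # AI-generated texts that are not flagged are false negatives.
    false_negatives = sum(score < threshold for score in ai_scores)
    return (false_positives / len(human_scores),
            false_negatives / len(ai_scores))


# Hypothetical "probability of AI" scores, invented for this illustration.
human_scores = [0.05, 0.10, 0.20, 0.35, 0.55, 0.60, 0.15, 0.45, 0.30, 0.70]
ai_scores = [0.95, 0.90, 0.40, 0.85, 0.65, 0.99, 0.50, 0.75, 0.80, 0.58]

for threshold in (0.3, 0.5, 0.7):
    fpr, fnr = error_rates(human_scores, ai_scores, threshold)
    print(f"threshold={threshold:.1f}  "
          f"false positives={fpr:.0%}  false negatives={fnr:.0%}")
```

Lowering the cutoff catches more AI text but accuses more human writers; raising it does the reverse. No single setting removes both kinds of error in this toy data, which is one reason two tools with different defaults can disagree about the same passage.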

Failure in Detecting AI Within Structured Academic Writing

Further evidence of AI detector failure comes from a 2025 study published in Acta Neurochirurgica examining whether AI-output detectors can distinguish human-written academic prose from AI-generated scientific text. Analyzing 1,000 academic passages—including 250 verified human-authored articles and 750 AI-generated counterparts—the researchers found only moderate overall detection performance, and none of the tools achieved perfect reliability.

The study also found many false positives in genuine academic writing. Even articles known to be human‑written were wrongly flagged: one detector marked 30.4% as more than 50% likely to be AI, and another flagged 16%. This shows that the clear and structured style of scientific writing can actually increase the chance of misclassification.

Results further showed wide disagreement between detectors analyzing the same academic texts. Human-written passages received average AI-likelihood scores ranging from about 5.9% to 36.9%, while AI-generated texts often scored between 80% and 99%, depending on the tool used. Such variation indicates that outcomes depend heavily on detector design and thresholds rather than consistent evidence of authorship.

The study also showed that AI‑generated abstracts received almost perfect originality scores in plagiarism checks. This means that well‑structured scientific writing can pass similarity tools even when it is produced by AI, while still being difficult for detectors to classify correctly. Overall, the findings suggest that academic writing conventions do not make AI detection more stable, and that current detectors are still unreliable for determining authorship.

Systemic Bias at the Heart of AI Detectors

The limitations of AI-output detectors are not just technical mistakes in individual algorithms. Instead, they point to broader systemic problems in how these tools are designed, used, and built into institutional workflows. A 2025 review in Information summarizes extensive peer‑reviewed research on AI detection in higher education and shows that the concerns go far beyond accuracy or error rates alone.

The review shows that common detectors like Turnitin AI, GPTZero, and Copyleaks often give inconsistent results, including false positives that wrongly label human writing as AI, especially when judging output from advanced models like ChatGPT-4. These errors reveal deeper structural problems, such as poor transparency in how decisions are made, shifting probability thresholds, and a limited understanding of how language differences affect accuracy.

Crucially, the review links detector performance to broader ethical, procedural, and teaching concerns. False positives and probability‑based results directly affect fairness and student rights, especially as institutions depend more on these tools for high‑stakes academic decisions. Because detectors rely on probability rather than certainty, authorship judgments can never be fully neutral or objective, even when policies suggest otherwise. As a result, these systems create risks of bias and inconsistency that fall most heavily on multilingual students, non‑native speakers, and anyone with less conventional writing styles.

The review also identifies gaps in institutional policy, uneven enforcement practices, and insufficient faculty training regarding the appropriate use and limitations of detection technologies. These findings emphasize that the central challenge is not only how accurate a tool may be, but also how its results are interpreted and applied within educational contexts.

Taken together, the evidence shows that the unreliability of AI detectors is systemic rather than merely technical, arising from the combined effects of algorithmic limitations, ethical concerns, institutional practices, and the wider sociotechnical contexts in which these tools are used.

Widespread Harm and the Collapse of Institutional Trust

Beyond technical limitations and accuracy concerns, real-world data reveals that AI-text detectors are causing significant harm, particularly to vulnerable groups such as multilingual and non-native English writers. Research from Stanford and Arizona State showed how often the detectors err: they falsely marked 61.22% of real TOEFL essays by non-native speakers as AI-written, with 97% flagged by at least one detector and 19% misidentified by all seven.

The impact of these false positives goes far beyond academic inconvenience. Real cases show that students wrongly accused of AI misuse often suffer serious emotional and psychological distress, such as panic, depression, and other mental health struggles. These harms also worsen existing inequalities in education, especially for international students who already face language barriers.

The level of misclassification is enormous. Even a low false-positive rate of 1%—much lower than what current AI detectors show—could lead to more than 223,500 U.S. college students each year being wrongly accused of AI misuse. With real error rates being higher, the true number is likely much greater.
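The arithmetic behind this estimate can be checked with a short Python sketch. The only inputs taken from the text are the 223,500 figure and the 1% rate; the base they imply is derived here and is not stated in the article, and whether it counts students or individual submissions is left open. The 5% and 10% rates are hypothetical values chosen only to show how the total scales, and the function name `expected_false_flags` is illustrative.

```python
from fractions import Fraction


def expected_false_flags(base, false_positive_rate):
    """Expected number of human-written items wrongly flagged as AI."""
    return base * false_positive_rate


article_estimate = 223_500        # figure quoted in the text
article_rate = Fraction(1, 100)   # the 1% false-positive rate quoted in the text

# Base implied by the article's own two numbers (an inference, not a
# figure given in the text).
implied_base = article_estimate / article_rate

# 1% reproduces the article's figure; 5% and 10% are hypothetical rates.
for rate in (Fraction(1, 100), Fraction(5, 100), Fraction(10, 100)):
    flagged = expected_false_flags(implied_base, rate)
    print(f"{float(rate):.0%} false-positive rate -> "
          f"{int(flagged):,} wrongly flagged")
```

Because the expected count is simply the base multiplied by the rate, it grows in direct proportion to the error rate: every additional percentage point adds another 223,500 wrongful flags on the same base.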

An Urgent Need for a Post–AI Detector Era

The warnings raised by students, professionals, and authors about AI detectors were never false alarms. They were early signals of a serious and growing problem. They revealed a system incapable of distinguishing truth from machine-generated guesswork.

These systemic failures have prompted leading universities to abandon AI-based detection systems entirely. Institutions including Cornell, Vanderbilt, the University of Pittsburgh, and the University of Iowa have disabled or withdrawn AI detectors due to concerns about equity, unreliability, and harm to students. Their decisions reflect an emerging recognition that these tools pose more risk than benefit when used in high-stakes academic integrity processes.

Recent research now provides clear and indisputable evidence of AI detectors’ inconsistencies, biases, and fundamental unreliability. These tools have repeatedly misclassified authentic human writing, resulting in lost opportunities, false accusations, and significant emotional distress for many individuals. As the number of affected voices grows and the evidence continues to mount, there is no defensible reason for institutions to keep relying on AI detectors.

The transition toward a post–AI-detector era, as well as a post-AI-fear era, is not only inevitable but urgently necessary. Unless the world acts quickly, AI detectors will continue to cause harm to students, professionals, and authors. History will not forgive a slow response that allows this damage to persist or permits machines, already proven to be unreliable and inefficient, to judge human authorship and integrity under an unfair principle: “guilty until proven innocent.”

The world already has alternatives: better, safer, and fairer ways of addressing AI misuse and evaluating integrity—methods grounded in human judgment, transparency, and sound pedagogical practice. AI detectors are not among them.

These alternative approaches can genuinely uphold academic, professional, and authorial integrity without harming innocent people. We will explore these approaches in our next piece.
